nf_conntrack: nf_conntrack: table full, dropping packet

This is worrying, since it potentially affects the user experience of tenants accessing our services. Oddly, it affects only 1 out of 6 worker nodes. After some time spent investigating, I identified the ingress-nginx-controller pod (using the container_sockets metric) as the process occupying much of the conntrack table, which is somewhat expected since it is the entrypoint to our cluster.

What is conntrack?

Conntrack (aka “connection tracking”) is a core feature of the Linux kernel’s networking stack and is built on top of the netfilter framework. It allows the kernel to track all network connections (protocol, source IP, source port, destination IP, destination port, connection state) in a table, which lets the kernel identify all packets that make up each connection and handle these connections consistently (iptables rules).

Kubernetes uses Service as an abstract way of exposing an application running on pods. This means load can be distributed across the pods via a single DNS name. There are primarily two ways (excluding CNIs) this is implemented. Iptables mode is where conntrack comes into play.

From the previous section, we know that iptables works by interacting with packet filtering hooks provided by netfilter to determine what to do with packets belonging to each connection.

Implementing a fix to this conntrack exhaustion problem

I did some research and it seems there are 2 approaches to this problem:

1. increasing the _conntrack_max value based on this formula: CONNTRACK_MAX = RAMSIZE (in bytes) / 16384 / (OS architecture bits / 32) (e.g. for a server with 16GB RAM running a 64-bit OS, the formula gives CONNTRACK_MAX = 16 * 1024 * 1024 * 1024 / 16384 / (64 / 32) = 524288).
2. decreasing the connection timeout so that stale connections are terminated as soon as possible.

I manually tested the first approach and it seems to have solved the problem (for now?).
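The sizing formula for CONNTRACK_MAX quoted in this post can be checked with a few lines of Python. This is only a sketch of the arithmetic: the function name `conntrack_max` and its parameters are made up for illustration, and it assumes the architecture term means "bits divided by 32" (so a 64-bit OS divides by 2).

```python
def conntrack_max(ram_bytes: int, arch_bits: int = 64) -> int:
    """Suggested table size: RAM (bytes) / 16384 / (arch_bits / 32).

    Hypothetical helper for illustration; not part of any kernel tooling.
    """
    if arch_bits not in (32, 64):
        raise ValueError("arch_bits must be 32 or 64")
    return ram_bytes // 16384 // (arch_bits // 32)


GIB = 1024 ** 3

# 16 GiB of RAM on a 64-bit OS, as in the example from the post.
print(conntrack_max(16 * GIB, 64))  # 524288
```

On a real node the resulting value would typically be applied through the `net.netfilter.nf_conntrack_max` sysctl; the post does not show the exact command that was used, so treat that step as an assumption.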

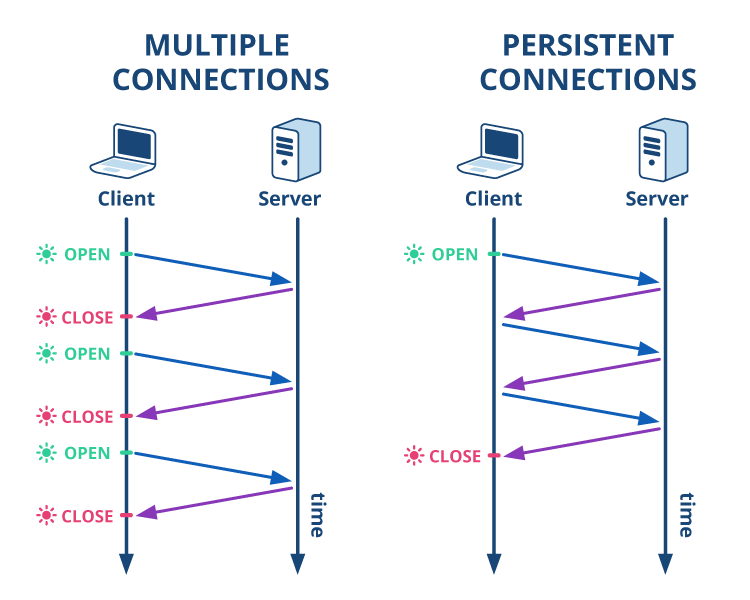

While there is no formal "connection" with UDP, there is still a convention that clients send requests and expect to get responses back with the source IP and port swapped with the destination IP and port. Stateful firewalls and NATs therefore assume that packets with a given combination of source IP/source port/destination IP/destination port, and the corresponding combination with source and destination swapped, form part of a "connection". This allows rules like "outgoing connections only" to be applied to UDP and allows reverse translations to be applied to response packets.

Unfortunately the firewall or NAT has no way of knowing when the client has finished talking to the server, so it has to wait for a timeout before removing the entry from its state tracking tables. In principle it would be possible to build a NAT box that used a stateless approach for port forwards while maintaining a stateful approach for outgoing connections, but it's simpler to just use stateful NAT for everything, and it sounds like this is what your vendor is doing.

Unfortunately, as you have discovered, this sucks for stateless UDP servers serving large numbers of small requests. You end up in a situation where the firewall consumes far more resources than the server itself.
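To make the timeout behaviour concrete, here is a toy model of a stateful tracking table. It is a deliberately simplified sketch, not how netfilter is implemented: `StateTable`, `flow_key`, and the parameters are hypothetical names, time is passed in explicitly, and the key ignores direction (real connection tracking distinguishes original and reply tuples).

```python
from dataclasses import dataclass, field


def flow_key(src_ip, src_port, dst_ip, dst_port):
    """Return the same key for a packet and for its reply (endpoints swapped)."""
    a, b = (src_ip, src_port), (dst_ip, dst_port)
    return (a, b) if a <= b else (b, a)


@dataclass
class StateTable:
    max_entries: int   # analogous to a table size limit
    timeout: float     # seconds an idle entry is kept
    entries: dict = field(default_factory=dict)  # flow key -> last-seen time

    def expire(self, now):
        # Entries can only be removed by timeout: the table never learns
        # that a UDP client has finished talking.
        stale = [k for k, seen in self.entries.items() if now - seen >= self.timeout]
        for k in stale:
            del self.entries[k]

    def track(self, src_ip, src_port, dst_ip, dst_port, now):
        """Return True if the packet is tracked, False if dropped (table full)."""
        self.expire(now)
        key = flow_key(src_ip, src_port, dst_ip, dst_port)
        if key not in self.entries and len(self.entries) >= self.max_entries:
            return False
        self.entries[key] = now
        return True


table = StateTable(max_entries=2, timeout=30.0)
print(table.track("10.0.0.1", 5000, "10.0.0.9", 53, now=0))   # new flow
print(table.track("10.0.0.9", 53, "10.0.0.1", 5000, now=1))   # reply, same entry
print(table.track("10.0.0.2", 5000, "10.0.0.9", 53, now=2))   # second flow
print(table.track("10.0.0.3", 5000, "10.0.0.9", 53, now=3))   # table full
print(table.track("10.0.0.3", 5000, "10.0.0.9", 53, now=40))  # old entries timed out
```

With many short-lived clients, the table fills with entries that are already finished but have not yet timed out, which is the situation described above.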
